Build Pipelines with Live Azure Data Lake Storage Data in Google Cloud Data Fusion (via CData Connect Cloud)

Use CData Connect Cloud to connect to Azure Data Lake Storage from Google Cloud Data Fusion, enabling the integration of live Azure Data Lake Storage data into the building and management of effective data pipelines.

Google Cloud Data Fusion simplifies building and managing data pipelines by offering a visual interface to connect, transform, and move data across various sources and destinations. When combined with CData Connect Cloud, it provides access to Azure Data Lake Storage data for building and managing ELT/ETL data pipelines. This article explains how to use CData Connect Cloud to create a live connection to Azure Data Lake Storage and how to connect to and access live Azure Data Lake Storage data from the Cloud Data Fusion platform.

Configure Azure Data Lake Storage Connectivity for Cloud Data Fusion

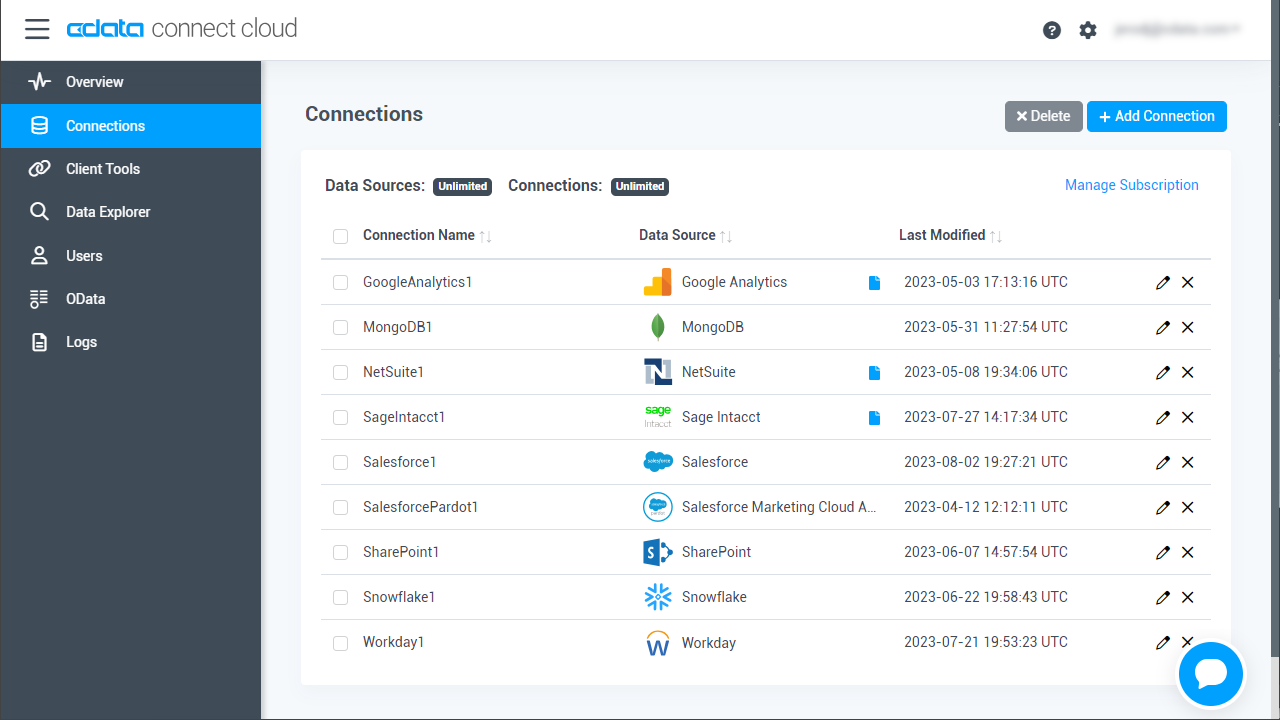

Connectivity to Azure Data Lake Storage from Cloud Data Fusion is made possible through CData Connect Cloud. To work with Azure Data Lake Storage data from Cloud Data Fusion, we start by creating and configuring an Azure Data Lake Storage connection.

- Log in to Connect Cloud, click Connections, and then click Add Connection.

![Adding a Connection]()

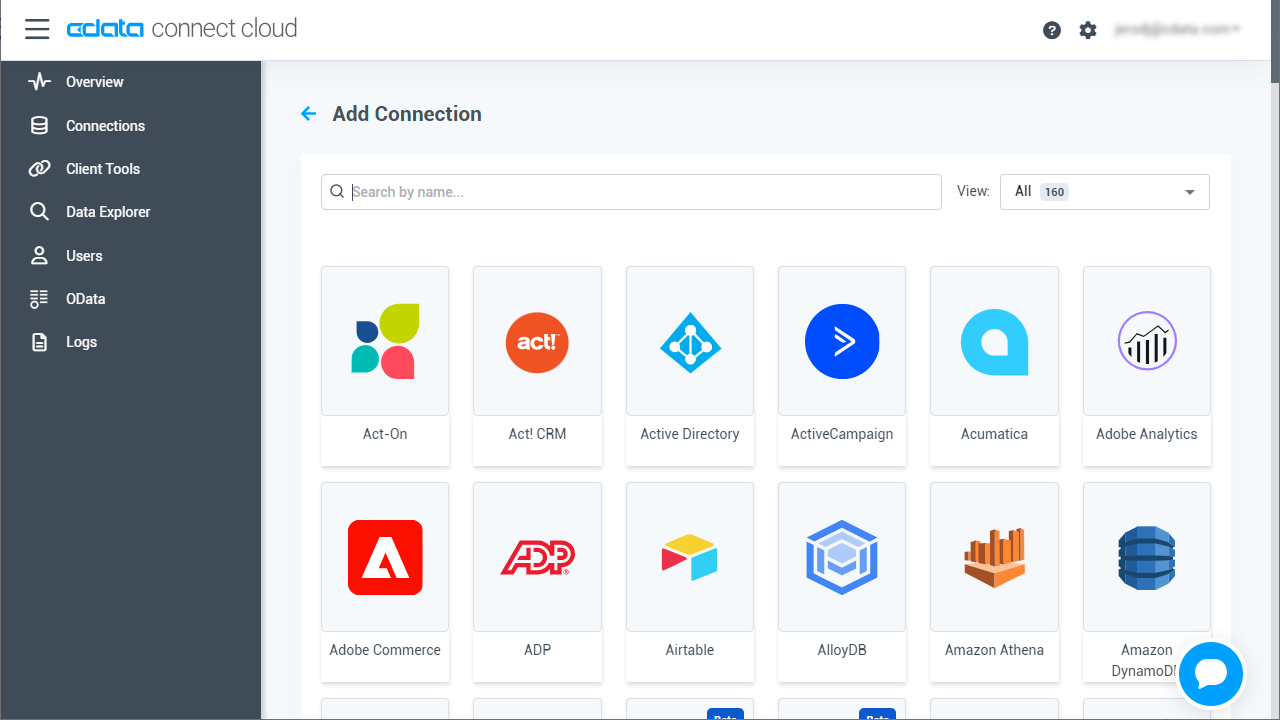

- Select "Azure Data Lake Storage" from the Add Connection panel

![Selecting a data source]()

- Enter the necessary authentication properties to connect to Azure Data Lake Storage.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

This requires an Active Directory web application.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the property for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key which will be used to authenticate the calls to the API. See the property for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

![Configuring a connection (Salesforce is shown)]()

- Click Create & Test

- Navigate to the Permissions tab in the Add Azure Data Lake Storage Connection page and update the User-based permissions.

![Updating permissions]()

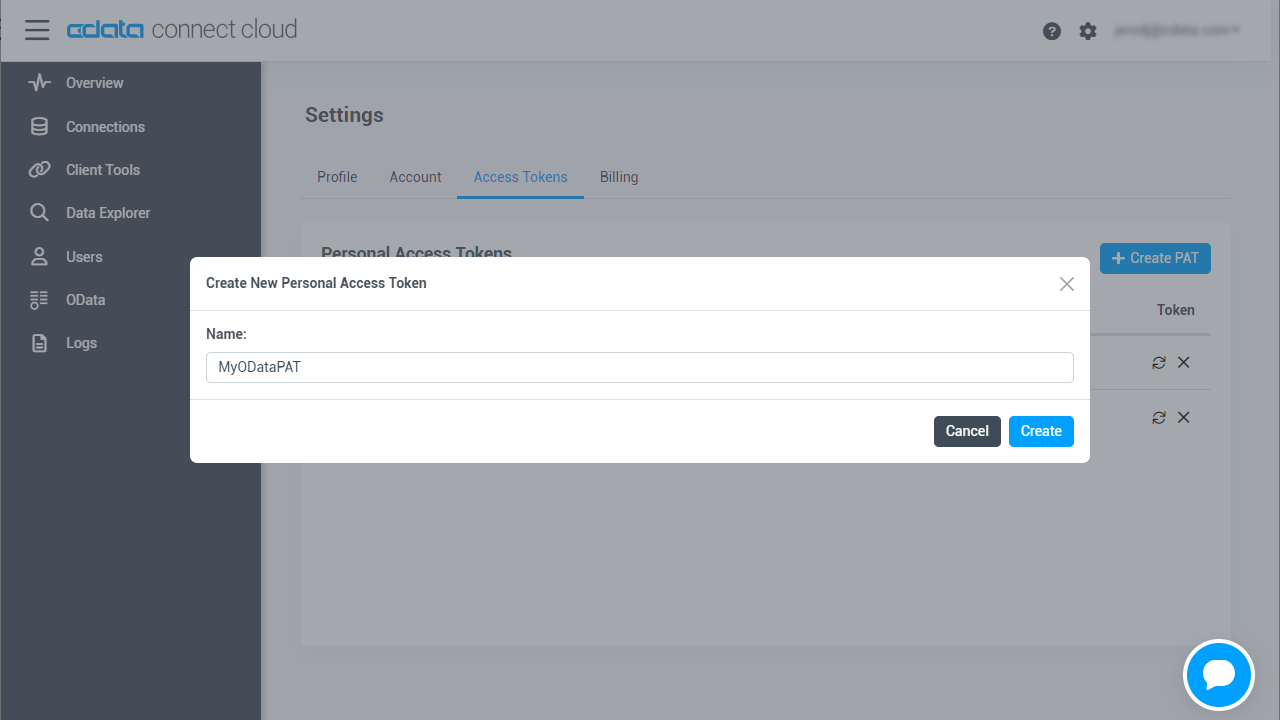
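The Gen 1 and Gen 2 property lists above can be summarized as a quick checklist before you click Create & Test. The following is a minimal sketch, not part of CData Connect Cloud: the class `AdlsPropertyCheck` and its `missing` method are hypothetical names, and the only facts it encodes are the property names listed in this article. Directory is treated as optional because the root directory is used when it is not specified.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical helper (not a CData API): reports which of the properties
// named in this article are still missing for a given Schema value.
final class AdlsPropertyCheck {
    // Required property names per Schema value, as listed in this article.
    // Directory is omitted because it falls back to the root directory.
    static final Map<String, List<String>> REQUIRED = Map.of(
        "ADLSGen1", List.of("Account", "OAuthClientId", "OAuthClientSecret", "TenantId"),
        "ADLSGen2", List.of("Account", "FileSystem", "AccessKey"));

    static List<String> missing(Map<String, String> props) {
        String schema = props.get("Schema");
        // Immutable maps reject null keys, so guard before the lookup.
        List<String> required = (schema == null) ? null : REQUIRED.get(schema);
        if (required == null) {
            throw new IllegalArgumentException(
                "Schema must be ADLSGen1 or ADLSGen2, got: " + schema);
        }
        List<String> result = new ArrayList<>();
        for (String name : required) {
            String value = props.get(name);
            if (value == null || value.isBlank()) {
                result.add(name);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        // Placeholder values only; substitute your own account details.
        Map<String, String> gen2 = Map.of(
            "Schema", "ADLSGen2",
            "Account", "myaccount",
            "FileSystem", "myfilesystem");
        System.out.println("Missing: " + missing(gen2)); // prints "Missing: [AccessKey]"
    }
}
```

A check like this only confirms that the fields are filled in. Whether the credentials are valid is still determined by Create & Test in Connect Cloud.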

Add a Personal Access Token

If you are connecting from a service, application, platform, or framework that does not support OAuth authentication, you can create a Personal Access Token (PAT) to use for authentication. Best practices would dictate that you create a separate PAT for each service, to maintain granularity of access.

- Click on your username at the top right of the Connect Cloud app and click User Profile.

- On the User Profile page, scroll down to the Personal Access Tokens section and click Create PAT.

- Give your PAT a name and click Create.

- The personal access token is only visible at creation, so be sure to copy it and store it securely for future use.

With the connection configured, you are ready to connect to Azure Data Lake Storage data from Cloud Data Fusion.

Connecting to Azure Data Lake Storage from Cloud Data Fusion

Follow these steps to establish a connection from Cloud Data Fusion to Azure Data Lake Storage through the CData Connect Cloud JDBC driver:

- Download and install the CData Connect Cloud JDBC driver:

- Open the Client Tools page of CData Connect Cloud.

- Search for JDBC or Cloud Data Fusion.

- Click on Download and select your operating system (Mac/Windows/Linux).

- Once the download is complete, run the setup file.

- When the installation is complete, the JAR file can be found in the installation directory (inside the lib folder).

- Log into Cloud Data Fusion.

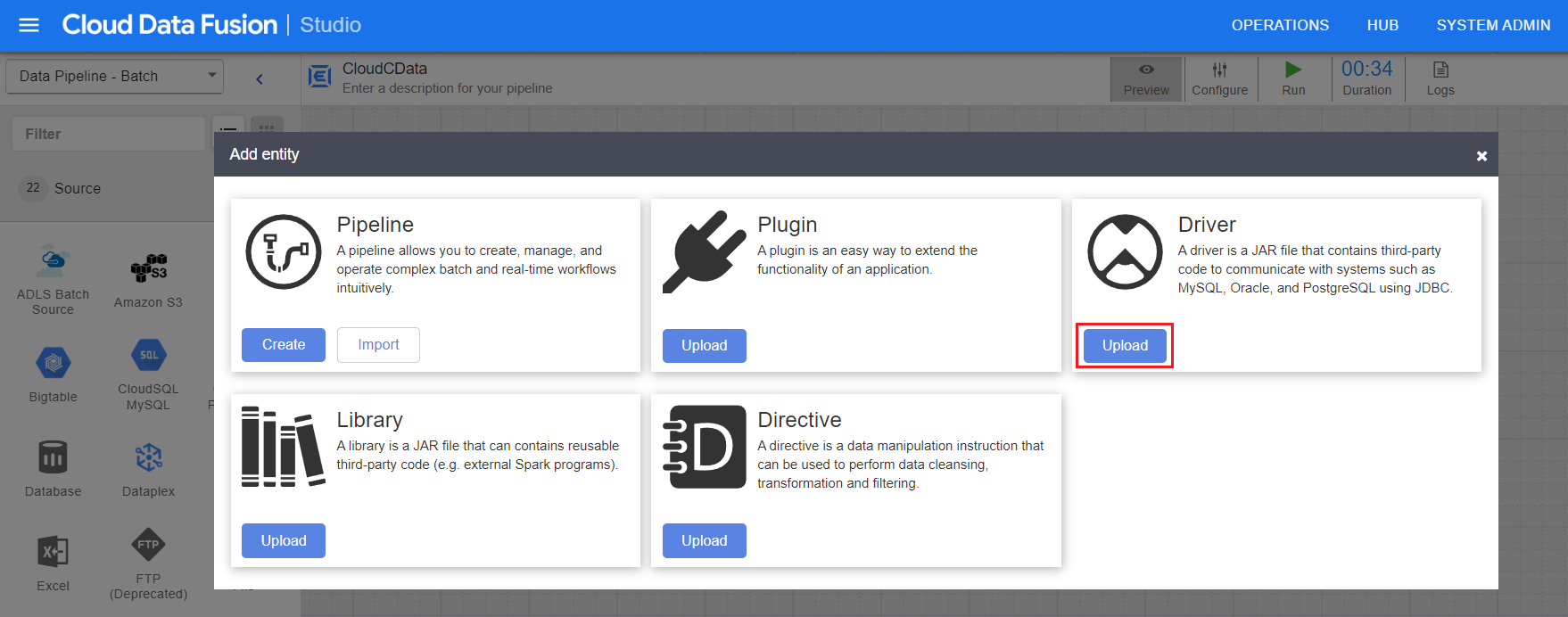

- Click the green "+" button at the top right to add an entity.

- Under Driver, click Upload.

![Upload the driver JAR file]()

- Now, upload the CData Connect Cloud JDBC driver (JAR file).

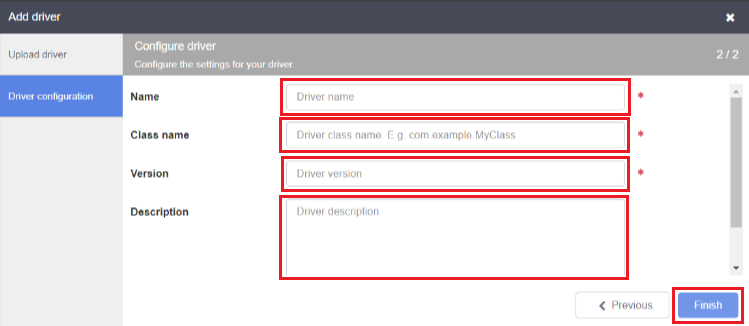

- Enter the driver settings:

- Name: Enter the name of the driver

- Class name: Enter "cdata.jdbc.connect.ConnectDriver"

- Version: Enter the driver version

- Description (optional): Enter a description for the driver

![Enter the driver settings]()

- Click on Finish.

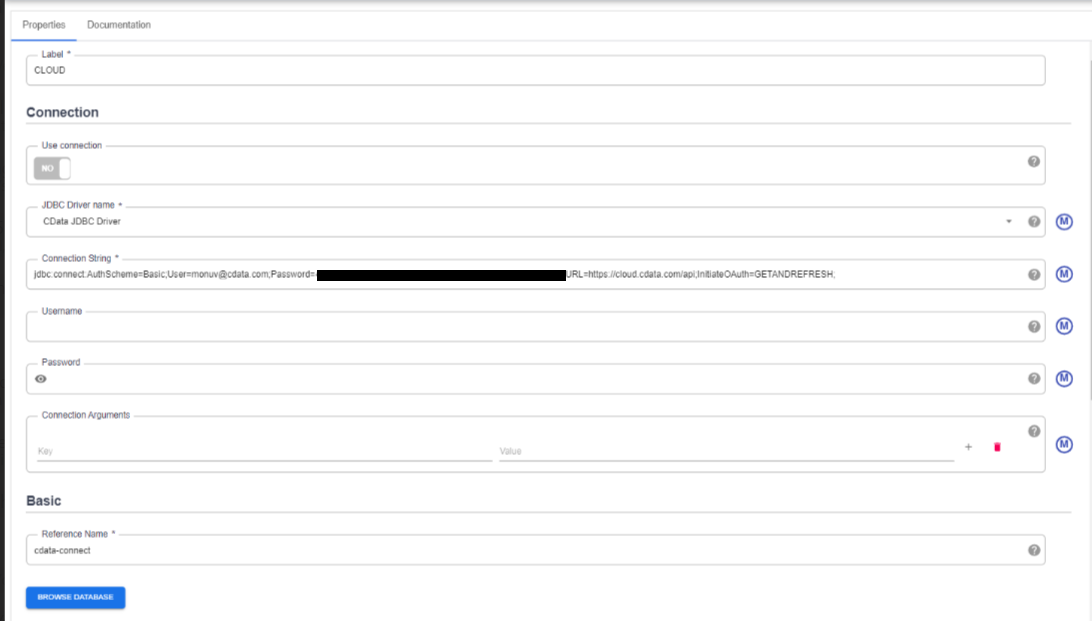

- Enter source configuration settings:

- Label: Helps to identify the connection

- JDBC driver name: Enter the name you gave the driver when you entered the driver settings above.

- Connection string: Enter the JDBC connection string and include the following parameters in it:

jdbc:connect:AuthScheme=Basic;User=[User];Password=[Password];

- User: Enter your CData Connect Cloud username, displayed in the top-right corner of the CData Connect Cloud interface. For example, "[email protected]"

- Password: Enter the PAT you generated on the User Profile page.

![Enter the source configuration settings]()

- Click Validate in the top right corner.

- If the connection is successful, you can manage the pipeline by editing it through the UI.

![Build and manage the pipeline in the UI]()

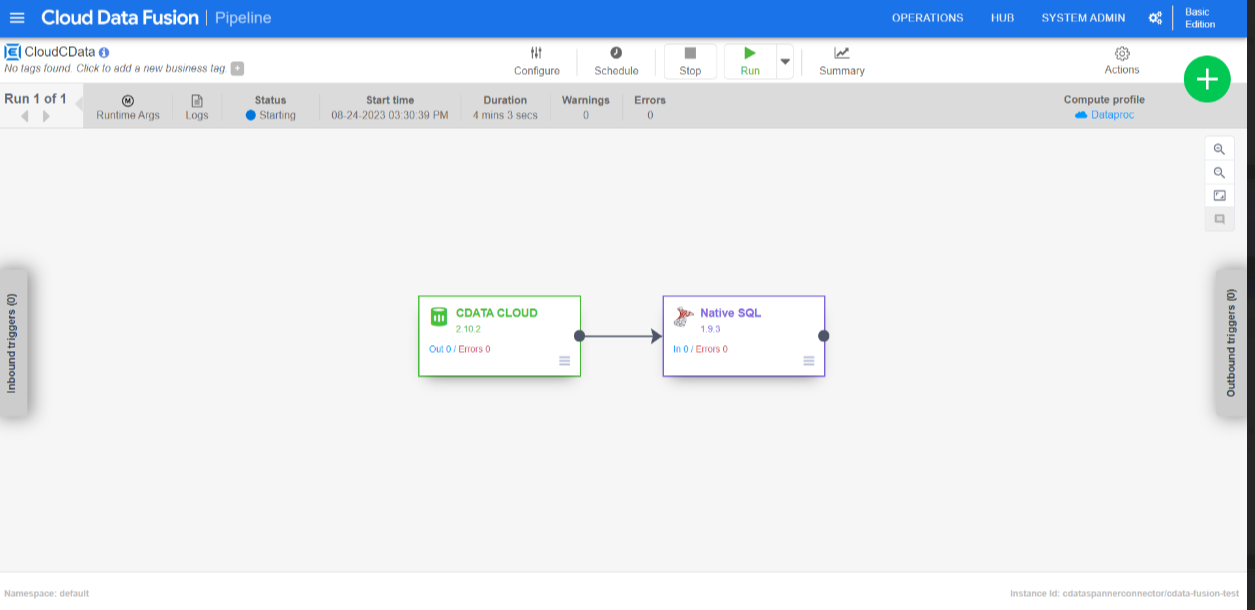

- Run the pipeline you created.

![Run the pipeline]()
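If Validate fails, it can help to test the same connection string outside Cloud Data Fusion. The sketch below assembles the string from the source configuration step and tries to open a connection with plain JDBC. It assumes the CData Connect Cloud JDBC JAR is on the classpath. The class name `ConnectCloudUrl`, the environment variable names `CONNECT_USER` and `CONNECT_PAT`, and the decision to reject values containing a semicolon are assumptions of this example, not documented driver behavior. The driver class name is the one given in the driver settings above.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;

// Hypothetical helper for checking the connection string outside Cloud Data Fusion.
final class ConnectCloudUrl {
    // Builds the connection string in the format shown in this article.
    static String build(String user, String pat) {
        // Assumption of this sketch: refuse ';' rather than guess how the
        // driver expects separators inside a value to be escaped.
        if (user.contains(";") || pat.contains(";")) {
            throw new IllegalArgumentException("User and PAT must not contain ';'");
        }
        return "jdbc:connect:AuthScheme=Basic;User=" + user + ";Password=" + pat + ";";
    }

    // Returns true if a connection can be opened and validated within 5 seconds.
    static boolean canConnect(String url) {
        try {
            // Driver class name as entered in the Cloud Data Fusion driver settings.
            Class.forName("cdata.jdbc.connect.ConnectDriver");
        } catch (ClassNotFoundException e) {
            System.err.println("Driver JAR not found on the classpath");
            return false;
        }
        try (Connection connection = DriverManager.getConnection(url)) {
            return connection.isValid(5);
        } catch (SQLException e) {
            System.err.println("Connection failed: " + e.getMessage());
            return false;
        }
    }

    public static void main(String[] args) {
        // Hypothetical environment variable names; keep the PAT out of source code.
        String user = System.getenv("CONNECT_USER");
        String pat = System.getenv("CONNECT_PAT");
        if (user == null || pat == null) {
            System.err.println("Set CONNECT_USER and CONNECT_PAT first");
            return;
        }
        System.out.println(canConnect(build(user, pat)) ? "Connection OK" : "Connection failed");
    }
}
```

Reading the PAT from the environment rather than hard-coding it follows from the earlier note that the token is only visible at creation and should be stored securely.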

Troubleshooting

Please be aware that there is a known issue in Cloud Data Fusion where "int" types from source data are automatically cast as "long".
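If downstream Java code that consumes the pipeline output expects 32-bit integers, the widened values can be narrowed back with an explicit range check. This is a minimal sketch of one way to handle the cast on the consumer side. The class `LongToInt` is a hypothetical name, and nothing here changes how Cloud Data Fusion itself types the column.

```java
// Hypothetical helper for downstream Java code; not part of Cloud Data Fusion or CData.
final class LongToInt {
    // Math.toIntExact throws ArithmeticException instead of silently wrapping
    // when the value does not fit in a 32-bit int.
    static int narrow(long value) {
        return Math.toIntExact(value);
    }

    public static void main(String[] args) {
        System.out.println(narrow(42L)); // prints 42
        try {
            narrow(3_000_000_000L); // larger than Integer.MAX_VALUE
        } catch (ArithmeticException e) {
            System.out.println("Value does not fit in an int");
        }
    }
}
```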

Live Access to Azure Data Lake Storage Data from Cloud Applications

Now you have a direct connection to live Azure Data Lake Storage data from Google Cloud Data Fusion. You can create more connections to move data across various sources and destinations and streamline your data integration processes, all without replicating Azure Data Lake Storage data.

To get real-time data access to 100+ SaaS, Big Data, and NoSQL sources (including Azure Data Lake Storage) directly from your cloud applications, explore CData Connect Cloud.