How to load SQL Server data into Elasticsearch via Logstash

This article introduces a simple way to load SQL Server data into the full-text search service Elasticsearch, using Logstash (its ETL module) and the CData JDBC driver.

Elasticsearch is a popular distributed full-text search engine. By storing data centrally, it lets you run very fast searches, fine-tune relevance, and perform powerful analytics with ease. Elasticsearch has a pipeline tool for loading data called "Logstash". With CData JDBC Drivers, you can import data from any data source a driver supports into Elasticsearch for search and analysis.

This article explains how to use the CData JDBC Driver for SQL Server to load data from SQL Server into Elasticsearch via Logstash.

Using CData JDBC Driver for SQL Server with Elasticsearch Logstash

- Install the CData JDBC Driver for SQL Server on the machine where Logstash is running.

- The JDBC Driver is installed at the following path (the year part, e.g. 20XX, varies with the product version you are using). You will use this path later, when you copy the .jar file (and the .lic file, if you have a licensed version) into Logstash.

C:\Program Files\CData\CData JDBC Driver for SQL 20XX\lib\cdata.jdbc.sql.jar

- Next, install the JDBC Input Plugin, which connects Logstash to the CData JDBC driver. The JDBC Plugin comes by default with the latest version of Logstash, but depending on the version, you may need to add it.

https://www.elastic.co/guide/en/logstash/5.4/plugins-inputs-jdbc.html

- Move the CData JDBC Driver's .jar file and .lic file to Logstash's "/logstash-core/lib/jars/".

Sending SQL Server data to Elasticsearch with Logstash

Now, let's create a configuration file for Logstash to transfer SQL Server data to Elasticsearch.

- In logstash.conf, the file that defines data processing in Logstash, describe how to retrieve the SQL Server data. The input is JDBC and the output is Elasticsearch. The data loading job is set to run at 30-second intervals.

- Set the CData JDBC Driver's .jar file as the JDBC driver library, configure the class name, and set the connection properties to SQL Server in the form of a JDBC URL. The JDBC URL allows detailed configuration, so please refer to the product documentation for more specifics.

Connecting to Microsoft SQL Server

Connect to Microsoft SQL Server using the following properties:

- Server: The name of the server running SQL Server.

- User: The username provided for authentication with SQL Server.

- Password: The password associated with the authenticating user.

- Database: The name of the SQL Server database.

Connecting to Azure SQL Server and Azure Data Warehouse

You can authenticate to Azure SQL Server or Azure Data Warehouse by setting the following connection properties:

- Server: The server running Azure. You can find this by logging into the Azure portal and navigating to "SQL databases" (or "SQL data warehouses") -> "Select your database" -> "Overview" -> "Server name."

- User: The name of the user authenticating to Azure.

- Password: The password associated with the authenticating user.

- Database: The name of the database, as seen in the Azure portal on the SQL databases (or SQL warehouses) page.
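Putting the steps in this section together, a logstash.conf could look roughly like the sketch below. Treat it as an illustration under assumptions rather than the exact file for your environment: the driver class name `cdata.jdbc.sql.SQLDriver`, the `jdbc:sql:` URL prefix, the `Orders` table and its columns, the `<LOGSTASH_HOME>` placeholder, the local Elasticsearch address, and all credential values are assumed here and should be checked against the driver's product documentation and your own setup. The index name `sql_table` is chosen only to match the Kibana query used later in this article.

```conf
input {
  jdbc {
    # Path to the CData .jar copied into Logstash (placeholder; adjust to your install)
    jdbc_driver_library => "<LOGSTASH_HOME>/logstash-core/lib/jars/cdata.jdbc.sql.jar"
    # Assumed driver class name; confirm it in the driver documentation
    jdbc_driver_class => "Java::cdata.jdbc.sql.SQLDriver"
    # Connection properties (Server, User, Password, Database) passed as a JDBC URL
    jdbc_connection_string => "jdbc:sql:Server=myServer;User=myUser;Password=myPassword;Database=myDatabase;"
    # jdbc_user is a required plugin setting; left empty on the assumption
    # that the credentials in the JDBC URL are the ones used
    jdbc_user => ""
    jdbc_password => ""
    # Six-field cron expression (seconds first): run every 30 seconds
    schedule => "*/30 * * * * *"
    # Hypothetical query; replace with the table and columns you want to load
    statement => "SELECT ShipName, Freight FROM Orders"
  }
}

output {
  elasticsearch {
    # Assumed local Elasticsearch node
    hosts => ["http://localhost:9200"]
    # Index that will receive the SQL Server rows
    index => "sql_table"
  }
}
```

Because no `document_id` is set in this sketch, each scheduled run indexes the query results as new documents. If you want repeated runs to overwrite rows instead of duplicating them, one option is to set `document_id` in the output to a unique column from your query.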

Executing data movement with Logstash

Now let's run Logstash using the created "logstash.conf" file.

logstash-7.8.0\bin\logstash -f logstash.conf

A log indicating success will appear. This means the SQL Server data has been loaded into Elasticsearch.

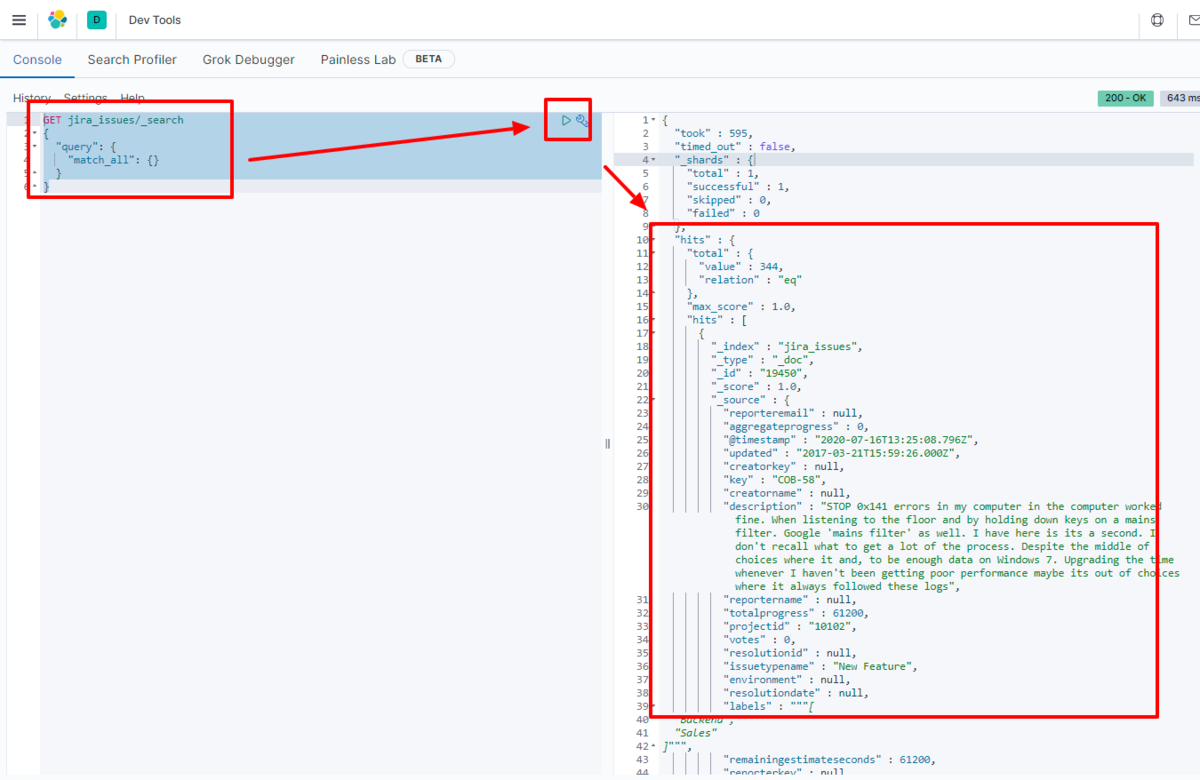

For example, let's view the data transferred to Elasticsearch in Kibana.

    GET sql_table/_search
    {
      "query": {
        "match_all": {}
      }
    }

We have confirmed that the data is stored in Elasticsearch.

Used with Logstash, the CData JDBC Driver for SQL Server functions as a SQL Server connector, making it easy to load data into Elasticsearch. Please try the 30-day free trial.