Connect to Databricks Data in HULFT Integrate

Connect to Databricks as a JDBC data source in HULFT Integrate

HULFT Integrate is a modern data integration platform that provides a drag-and-drop user interface for building cooperation flows, data conversions, and processing steps, making complex data connections easier to execute. When paired with the CData JDBC Driver for Databricks, HULFT Integrate can work with live Databricks data. This article walks through connecting to Databricks and moving the data into a CSV file.

With built-in optimized data processing, the CData JDBC driver offers unmatched performance for interacting with live Databricks data. When you issue complex SQL queries to Databricks, the driver pushes supported SQL operations, like filters and aggregations, directly to Databricks and uses the embedded SQL engine to process unsupported operations (often SQL functions and JOIN operations) client-side. Its built-in dynamic metadata querying allows you to work with and analyze Databricks data using native data types.

About Databricks Data Integration

Accessing and integrating live data from Databricks has never been easier with CData. Customers rely on CData connectivity to:

- Access all versions of Databricks from Runtime Versions 9.1 - 13.X to both the Pro and Classic Databricks SQL versions.

- Leave Databricks in their preferred environment thanks to compatibility with any hosting solution.

- Securely authenticate in a variety of ways, including personal access token, Azure Service Principal, and Azure AD.

- Upload data to Databricks using Databricks File System, Azure Blob Storage, and AWS S3 Storage.

While many customers are using CData's solutions to migrate data from different systems into their Databricks data lakehouse, several customers use our live connectivity solutions to federate connectivity between their databases and Databricks. These customers are using SQL Server Linked Servers or PolyBase to get live access to Databricks from within their existing RDBMS.

Read more about common Databricks use-cases and how CData's solutions help solve data problems in our blog: What is Databricks Used For? 6 Use Cases.

Getting Started

Enable Access to Databricks

To enable access to Databricks data from HULFT Integrate projects:

- Copy the CData JDBC Driver JAR file, cdata.jdbc.databricks.jar (and the license file, cdata.jdbc.databricks.lic, if it exists), to the jdbc_adapter subfolder for the Integrate Server.

- Restart the HULFT Integrate Server and launch HULFT Integrate Studio.

Build a Project with Access to Databricks Data

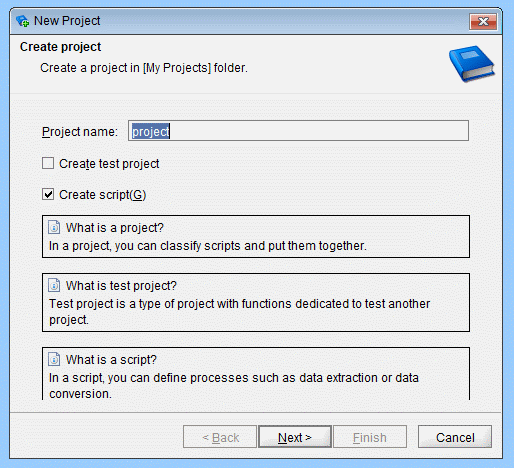

Once you copy the JAR files, you can create a project with access to Databricks data. Start by opening Integrate Studio and creating a new project.

- Name the project

- Ensure the "Create script" checkbox is checked

- Click Next

![Creating a new project.]()

- Name the script (e.g., DatabrickstoCSV)

Once you create the project, add components to the script to copy Databricks data to a CSV file.

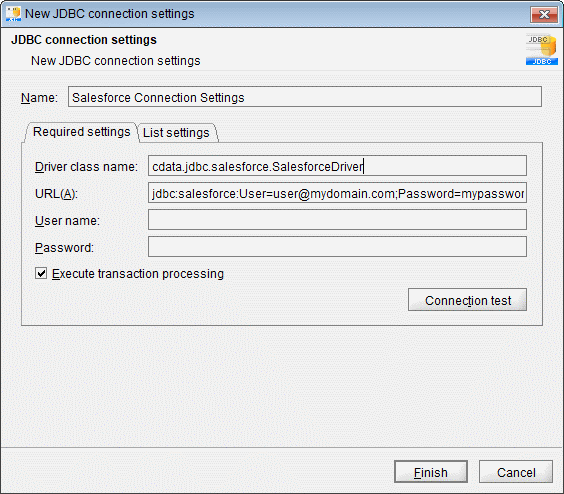

Configure an Execute Select SQL Component

Drag an "Execute Select SQL" component from the Tool Palette (Database -> JDBC) into the Script workspace.

- In the "Required settings" tab for the Destination, click "Add" to create a new connection for Databricks. Set the following properties:

- Name: Databricks Connection Settings

- Driver class name: cdata.jdbc.databricks.DatabricksDriver

- URL: jdbc:databricks:Server=127.0.0.1;Port=443;TransportMode=HTTP;HTTPPath=MyHTTPPath;UseSSL=True;User=MyUser;Password=MyPassword;

![JDBC connection settings (Salesforce is shown).]()

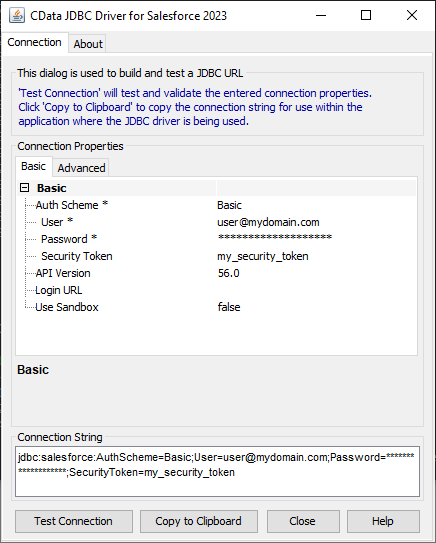

Built-in Connection String Designer

For assistance constructing the JDBC URL, use the connection string designer built into the Databricks JDBC Driver. Either double-click the JAR file or execute the JAR file from the command line.

java -jar cdata.jdbc.databricks.jar

Fill in the connection properties and copy the connection string to the clipboard.

To connect to a Databricks cluster, set the properties as described below.

Note: You can find the required values in your Databricks instance by navigating to Clusters, selecting the desired cluster, and opening the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token (this value can be obtained by navigating to the User Settings page of your Databricks instance and selecting the Access Tokens tab).
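As a rough illustration of how these three properties combine into a JDBC URL, the following Java sketch assembles a connection string by hand. The class and method names (`DatabricksUrl`, `build`) are hypothetical, the host, path, and token values are placeholders, and the `Key=Value;` layout is assumed from the sample URL shown earlier in this article. The connection string designer remains the more reliable way to generate the string.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper (not part of the CData driver): joins connection
// properties into the "jdbc:databricks:Key=Value;..." form used in this article.
class DatabricksUrl {
    static String build(String server, String httpPath, String token) {
        // LinkedHashMap keeps the properties in insertion order.
        Map<String, String> props = new LinkedHashMap<>();
        props.put("Server", server);
        props.put("HTTPPath", httpPath);
        props.put("Token", token);

        StringBuilder url = new StringBuilder("jdbc:databricks:");
        for (Map.Entry<String, String> e : props.entrySet()) {
            url.append(e.getKey()).append('=').append(e.getValue()).append(';');
        }
        return url.toString();
    }

    public static void main(String[] args) {
        // Placeholder values; substitute the ones from the JDBC/ODBC tab.
        System.out.println(build("my-workspace.example.com", "MyHTTPPath", "MyToken"));
    }
}
```

This sketch does not escape values, so it assumes none of them contain a semicolon. Treat the token like a password and avoid hard-coding it in scripts that are shared or checked into version control.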

![Using the built-in connection string designer to generate a JDBC URL (Salesforce is shown.)]()

- Write your SQL statement. For example:

SELECT City, CompanyName FROM Customers

- Click "Extraction test" to ensure the connection and query are configured properly

- Click "Execute SQL statement and set output schema"

- Click "Finish"
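Outside of HULFT Integrate, the same extraction can be approximated with plain JDBC, which may help when debugging a connection string or query. The sketch below assumes the CData JAR is on the classpath and that a `Customers` table with `City` and `CompanyName` columns exists. The class name `SelectSketch` and the method `fetch` are hypothetical, and the URL is the placeholder from this article. It illustrates the idea rather than what the component does internally.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.ArrayList;
import java.util.List;

class SelectSketch {
    // Runs the query and returns each row as a String array, one entry per column.
    static List<String[]> fetch(String jdbcUrl, String sql) throws SQLException {
        List<String[]> rows = new ArrayList<>();
        // try-with-resources closes the result set, statement, and connection.
        try (Connection conn = DriverManager.getConnection(jdbcUrl);
             Statement stmt = conn.createStatement();
             ResultSet rs = stmt.executeQuery(sql)) {
            int columns = rs.getMetaData().getColumnCount();
            while (rs.next()) {
                String[] row = new String[columns];
                for (int i = 0; i < columns; i++) {
                    row[i] = rs.getString(i + 1); // JDBC column indexes are 1-based
                }
                rows.add(row);
            }
        }
        return rows;
    }

    public static void main(String[] args) {
        // Placeholder URL copied from the connection settings above.
        String url = "jdbc:databricks:Server=127.0.0.1;Port=443;TransportMode=HTTP;"
                   + "HTTPPath=MyHTTPPath;UseSSL=True;User=MyUser;Password=MyPassword;";
        try {
            for (String[] row : fetch(url, "SELECT City, CompanyName FROM Customers")) {
                System.out.println(String.join(", ", row));
            }
        } catch (SQLException e) {
            System.err.println("Query failed: " + e.getMessage());
        }
    }
}
```

If no driver on the classpath accepts the URL, `DriverManager.getConnection` throws a `SQLException`, which is a quick way to confirm whether the JAR was picked up.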

![Configuring the Execute Select SQL operation]()

Configure a Write CSV File Component

Drag a "Write CSV File" component from the Tool Palette (File -> CSV) onto the workspace.

- Set a file to write the query results to (e.g. Customers.csv)

- Set "Input data" to the "Select SQL" component

- Add columns for each field selected in the SQL query

- In the "Write settings" tab, check the checkbox to "Insert column names into first row"

- Click "Finish"
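For readers who want to see what "Insert column names into first row" amounts to, here is a minimal Java sketch that writes a header line followed by data rows. The class `CsvSketch`, its methods, and the sample rows are hypothetical. The quoting rule (wrap fields containing commas, quotes, or line breaks in double quotes) follows common CSV practice rather than any documented HULFT Integrate behavior.

```java
import java.io.IOException;
import java.io.StringWriter;
import java.io.Writer;
import java.util.Arrays;
import java.util.List;

class CsvSketch {
    // Quote a field only when it contains a comma, double quote, or line break.
    static String escape(String field) {
        if (field == null) {
            return "";
        }
        if (field.contains(",") || field.contains("\"")
                || field.contains("\n") || field.contains("\r")) {
            return "\"" + field.replace("\"", "\"\"") + "\"";
        }
        return field;
    }

    // Writes the column names first, then one line per data row.
    static void write(Writer out, String[] header, List<String[]> rows) throws IOException {
        writeLine(out, header);
        for (String[] row : rows) {
            writeLine(out, row);
        }
    }

    private static void writeLine(Writer out, String[] fields) throws IOException {
        for (int i = 0; i < fields.length; i++) {
            if (i > 0) {
                out.write(',');
            }
            out.write(escape(fields[i]));
        }
        out.write("\n");
    }

    public static void main(String[] args) throws IOException {
        // Made-up sample rows standing in for the query results.
        StringWriter out = new StringWriter();
        write(out, new String[] {"City", "CompanyName"},
              Arrays.asList(new String[] {"Berlin", "Example GmbH"},
                            new String[] {"Austin", "Acme, Inc."}));
        System.out.print(out);
    }
}
```

Writing to a `Writer` keeps the sketch independent of the file system; passing a `FileWriter` for Customers.csv instead of the `StringWriter` would produce a file on disk.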

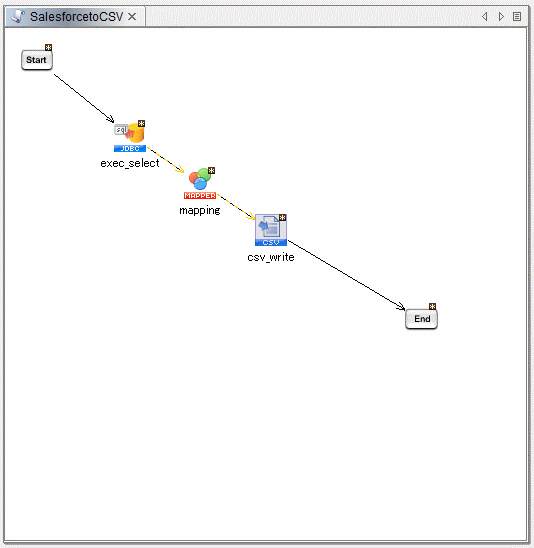

Map Databricks Fields to the CSV Columns

Map each column from the "Select" component to the corresponding column for the "CSV" component.

Finish the Script

Drag the "Start" component onto the "Select" component and the "CSV" component onto the "End" component. Build and run the script to move Databricks data into a CSV file.

Download a free, 30-day trial of the CData JDBC Driver for Databricks and start working with your live Databricks data in HULFT Integrate. Reach out to our Support Team if you have any questions.