How to work with Amazon Athena Data in Apache Spark using SQL

Access and process Amazon Athena Data in Apache Spark using the CData JDBC Driver.

Apache Spark is a fast and general engine for large-scale data processing. When paired with the CData JDBC Driver for Amazon Athena, Spark can work with live Amazon Athena data. This article describes how to connect to and query Amazon Athena data from a Spark shell.

The CData JDBC Driver offers unmatched performance for interacting with live Amazon Athena data due to optimized data processing built into the driver. When you issue complex SQL queries to Amazon Athena, the driver pushes supported SQL operations, like filters and aggregations, directly to Amazon Athena and utilizes the embedded SQL engine to process unsupported operations (often SQL functions and JOIN operations) client-side. With built-in dynamic metadata querying, you can work with and analyze Amazon Athena data using native data types.

About Amazon Athena Data Integration

CData provides the easiest way to access and integrate live data from Amazon Athena. Customers use CData connectivity to:

- Authenticate securely using a variety of methods, including IAM credentials, access keys, and Instance Profiles, catering to diverse security needs and simplifying the authentication process.

- Streamline their setup and quickly resolve issues with detailed error messaging.

- Enhance performance and minimize strain on client resources with server-side query execution.

Users frequently integrate Athena with analytics tools like Tableau, Power BI, and Excel for in-depth analytics from their preferred tools.

To learn more about unique Amazon Athena use cases with CData, check out our blog post: https://www.cdata.com/blog/amazon-athena-use-cases.

Getting Started

Install the CData JDBC Driver for Amazon Athena

Download the CData JDBC Driver for Amazon Athena installer, unzip the package, and run the JAR file to install the driver.

Start a Spark Shell and Connect to Amazon Athena Data

- Open a terminal and start the Spark shell, passing the CData JDBC Driver for Amazon Athena JAR file to the --jars parameter. Quote the path, because it contains spaces:

$ spark-shell --jars "/CData/CData JDBC Driver for Amazon Athena/lib/cdata.jdbc.amazonathena.jar"

- With the shell running, you can connect to Amazon Athena with a JDBC URL and use the DataFrameReader load() function to read a table.

Authenticating to Amazon Athena

To authorize Amazon Athena requests, provide the credentials for an administrator account or for an IAM user with custom permissions:

- Set AccessKey to the access key ID.

- Set SecretKey to the secret access key.

Note: Though you can connect as the AWS account administrator, it is recommended to use IAM user credentials to access AWS services.
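Rather than typing keys directly into the JDBC URL in the Spark shell, you can read them from the environment. The sketch below is a minimal example under stated assumptions: AthenaCredentials is a hypothetical helper, AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY are the environment variable names conventionally used by the AWS CLI and SDKs, and the AWSAccessKey and AWSSecretKey property names are taken from the sample JDBC URL later in this article (the prose above calls them AccessKey and SecretKey, so check which spelling your driver version expects).

```scala
// Hypothetical helper (not part of the CData driver): builds the credential
// portion of the JDBC URL from environment variables instead of hardcoding keys.
object AthenaCredentials {
  // Environment variable names conventionally used by the AWS CLI and SDKs.
  val AccessKeyVar = "AWS_ACCESS_KEY_ID"
  val SecretKeyVar = "AWS_SECRET_ACCESS_KEY"

  // Returns Some("AWSAccessKey='...';AWSSecretKey='...';") when both variables
  // are present and non-empty, otherwise None. The property names follow this
  // article's sample URL (assumption: confirm them for your driver version).
  def fragment(env: Map[String, String]): Option[String] =
    for {
      access <- env.get(AccessKeyVar).filter(_.nonEmpty)
      secret <- env.get(SecretKeyVar).filter(_.nonEmpty)
    } yield s"AWSAccessKey='$access';AWSSecretKey='$secret';"

  // Convenience overload that reads the current process environment.
  def fromSystemEnv(): Option[String] = fragment(sys.env)
}
```

In the Spark shell you could paste the object with :paste, call AthenaCredentials.fromSystemEnv(), and prepend the result to the remaining connection properties. Taking the environment as a Map parameter keeps the logic testable without touching real credentials.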

Obtaining the Access Key

To obtain the credentials for an IAM user, follow the steps below:

- Sign into the IAM console.

- In the navigation pane, select Users.

- To create or manage the access keys for a user, select the user and then select the Security Credentials tab.

To obtain the credentials for your AWS root account, follow the steps below:

- Sign into the AWS Management console with the credentials for your root account.

- Select your account name or number and select My Security Credentials in the menu that is displayed.

- Click Continue to Security Credentials and expand the Access Keys section to manage or create root account access keys.

Authenticating from an EC2 Instance

If you are using the CData JDBC Driver for Amazon Athena from an EC2 instance that has an IAM role assigned, you can use that role to authenticate. To do so, set UseEC2Roles to true and leave AccessKey and SecretKey empty. The driver automatically obtains the IAM role credentials and authenticates with them.

Authenticating as an AWS Role

In many situations it is preferable to authenticate with an IAM role instead of the direct security credentials of an AWS root user. To use a role, set RoleARN; the driver then attempts to retrieve credentials for the specified role. If you are connecting from outside AWS (rather than from an environment that is already authenticated, such as an EC2 instance), you must also specify the AccessKey and SecretKey of an IAM user that will assume the role. Roles cannot be assumed with the AccessKey and SecretKey of an AWS root user.

Authenticating with MFA

For users and roles that require multi-factor authentication (MFA), specify the MFASerialNumber and MFAToken connection properties. The driver then submits the MFA credentials in a request for temporary authentication credentials. The lifetime of the temporary credentials is controlled by the TemporaryTokenDuration property (default 3600 seconds).
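The authentication options above interact in a few ways: EC2 roles replace explicit keys, a role ARN used from outside AWS still needs IAM user keys, and MFA needs both the serial number and the token. The following sketch encodes those rules as a pre-flight check you might run before building a connection string. AthenaAuthCheck is hypothetical and not part of the driver; it uses the property names as written in this article's prose (newer driver releases may spell some with an AWS prefix, as the sample URL does), treats UseEC2Roles=true as a stand-in for "running on EC2", and cannot tell root keys from IAM user keys, so the root-user restriction on roles is not checked.

```scala
import scala.util.Try

// Hypothetical pre-flight check (not part of the CData driver) for the
// authentication rules described in this article. Property names follow the
// article's prose; your driver version may use different spellings.
object AthenaAuthCheck {
  private def has(props: Map[String, String], key: String): Boolean =
    props.get(key).exists(_.trim.nonEmpty)

  // Returns a list of problems; an empty list means no rule was violated.
  def problems(props: Map[String, String]): List[String] = {
    val useEc2  = props.get("UseEC2Roles").exists(_.trim.equalsIgnoreCase("true"))
    val hasKeys = has(props, "AccessKey") && has(props, "SecretKey")
    val anyKey  = has(props, "AccessKey") || has(props, "SecretKey")

    // With UseEC2Roles the instance's IAM role is used, so keys stay empty.
    val ec2Conflict =
      if (useEc2 && anyKey) List("leave AccessKey and SecretKey empty when UseEC2Roles=true")
      else Nil

    // Outside EC2, IAM user keys are required, including to assume RoleARN.
    val missingKeys =
      if (!useEc2 && !hasKeys) {
        if (has(props, "RoleARN"))
          List("RoleARN needs the AccessKey and SecretKey of an IAM user to assume the role")
        else
          List("set AccessKey and SecretKey, or UseEC2Roles=true on an EC2 instance")
      } else Nil

    // MFA requires both the device serial number and the current token.
    val mfaPair =
      if (has(props, "MFASerialNumber") != has(props, "MFAToken"))
        List("set MFASerialNumber and MFAToken together")
      else Nil

    // TemporaryTokenDuration is in seconds (3600 by default); require a positive integer.
    val duration = props.get("TemporaryTokenDuration") match {
      case Some(v) if !Try(v.trim.toInt).toOption.exists(_ > 0) =>
        List("TemporaryTokenDuration must be a positive number of seconds")
      case _ => Nil
    }

    ec2Conflict ++ missingKeys ++ mfaPair ++ duration
  }
}
```

Returning a list of messages rather than throwing on the first problem lets you report every misconfiguration at once before the driver attempts a connection.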

Connecting to Amazon Athena

In addition to the AccessKey and SecretKey properties, specify Database, S3StagingDirectory and Region. Set Region to the region where your Amazon Athena data is hosted. Set S3StagingDirectory to a folder in S3 where you would like to store the results of queries.

If Database is not set in the connection, the driver connects to the default database set in Amazon Athena.
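All of these settings end up as semicolon-separated property='value' pairs in the JDBC URL. The helper below is a hypothetical sketch that assembles such a URL from ordered pairs; the jdbc:amazonathena: prefix, the single-quoted values, and the property names in the usage example are copied from the sample URL passed to spark.sqlContext.read later in this article. It does not escape quotes, so it rejects values that contain a single quote rather than emitting a malformed URL.

```scala
// Hypothetical helper (not part of the CData driver): builds a JDBC URL in the
// same property='value'; format as this article's sample connection string.
object AthenaJdbcUrl {
  val Prefix = "jdbc:amazonathena:"

  def build(props: Seq[(String, String)]): String = {
    // Values are wrapped in single quotes without escaping, so refuse any
    // value that would break the quoting.
    props.foreach { case (key, value) =>
      require(!value.contains("'"), s"value for $key must not contain a single quote")
    }
    // Blank values are skipped so optional properties such as Database can be
    // left out of the URL entirely.
    val parts = props.collect {
      case (key, value) if value.trim.nonEmpty => s"$key='$value';"
    }
    Prefix + parts.mkString
  }
}
```

For example, AthenaJdbcUrl.build(Seq("AWSRegion" -> "IRELAND", "Database" -> "sampledb", "S3StagingDirectory" -> "s3://bucket/staging/")) returns a string you can pass to .option("url", ...). Leaving Database blank drops it from the URL, so the driver falls back to the default Athena database.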

Built-in Connection String Designer

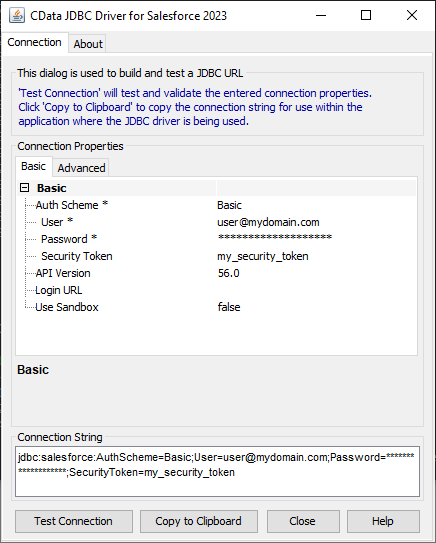

For assistance in constructing the JDBC URL, use the connection string designer built into the CData JDBC Driver for Amazon Athena. Either double-click the JAR file or execute it from the command line:

java -jar cdata.jdbc.amazonathena.jar

Fill in the connection properties and copy the connection string to the clipboard.

![Using the built-in connection string designer to generate a JDBC URL (Salesforce is shown.)]()

Configure the connection to Amazon Athena, using the connection string generated above.

scala> val amazonathena_df = spark.sqlContext.read.format("jdbc").option("url", "jdbc:amazonathena:AWSAccessKey='a123';AWSSecretKey='s123';AWSRegion='IRELAND';Database='sampledb';S3StagingDirectory='s3://bucket/staging/';").option("dbtable","Customers").option("driver","cdata.jdbc.amazonathena.AmazonAthenaDriver").load()

Once you connect and the data is loaded, you will see the table schema displayed.

Register the Amazon Athena data as a temporary view so that it can be queried with SQL:

scala> amazonathena_df.createOrReplaceTempView("customers")

Perform custom SQL queries against the data using commands like the one below:

scala> amazonathena_df.sqlContext.sql("SELECT Name, TotalDue FROM customers WHERE CustomerId = 12345").collect.foreach(println)

You will see the results displayed in the console, similar to the following:

![Data in Apache Spark (Salesforce is shown)]()

Using the CData JDBC Driver for Amazon Athena in Apache Spark, you are able to perform fast and complex analytics on Amazon Athena data, combining the power and utility of Spark with your data. Download a free, 30-day trial of any of the 200+ CData JDBC Drivers and get started today.